My former colleague (and frequent host for good beer at events) Stephen O’Grady of RedMonk has written a typically smart piece titled “What is the Atomic Unit of Computing?” which makes some important points.

However, on one particular point I’d like to share a somewhat different perspective in the context of my cloud work at Red Hat. He makes that point when he writes: "Perhaps more importantly, however, there are two larger industry shifts at work which ease the adoption of container technologies… More specific to containers specifically, however, is the steady erosion in the importance of the operating system."

It’s not the operating system that’s becoming less important even as it continues to evolve. It’s the individual operating system instance that’s been configured, tuned, integrated, and ultimately married to a single application that is becoming less so.

First of all, let me say that any differences here are probably in part a matter of semantics and vantage point. For example, Stephen goes on to write about how PaaS abstracts the application from the operating system running underneath. No quibbles there. There is absolutely an ongoing abstraction of the operating system; we’re moving away from the handcrafted and hardcoded operating system instances that accompanied each application instance, just as we previously moved away from operating system instances lovingly crafted for each individual server. He also writes (and I fully agree) that “If applications are heavily operating system dependent and you run a mix of operating systems, containers will be problematic.” Clearly one of the trends that makes containers interesting today in a way that they were not (beyond a niche) a decade ago is the wholesale shift from pet operating systems to cattle operating systems.

But, and here’s where I take some exception to the “erosion in the importance” phrase, the operating system is still there and it’s still providing the framework for all the containers sitting above it. In a containerized environment, the OS arguably plays an even greater role than it does under hardware server virtualization, where the host is a hypervisor. (Of course, in the case of KVM for example, the hypervisor makes use of the OS for the OS-like functions that it needs, but there’s nothing inherent in the hypervisor architecture requiring that.)

In other words, the operating system matters more than ever. It’s just that you’re using a standard base image across all of your applications rather than taking that standard base image and tweaking it for each individual one. All the security hardening, performance tuning, reliability engineering, and certifications that apply to the virtualized world still apply in the containerized one.

To Stephen's broader point, we’re moving toward an architecture in which (the minimum set of) dependencies are packaged with the application rather than bundled as part of a complete operating system image. We’re also moving toward a future in which the OS explicitly deals with multi-host applications, serving as an orchestrator and scheduler for them. This includes modeling the app across multiple hosts and containers and providing the services and APIs to place the apps onto the appropriate resources.
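
As a rough illustration of what "placing the apps onto the appropriate resources" can mean, here is a toy sketch in Python. It is not how Kubernetes or any real orchestrator works; the `Host` and `Container` classes, the memory-only resource model, and the greedy "most free memory" rule are all assumptions made up for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    """A hypothetical container host with a single resource: free memory."""
    name: str
    free_memory_mb: int
    containers: list = field(default_factory=list)


@dataclass
class Container:
    """A hypothetical container that needs a fixed amount of memory."""
    name: str
    memory_mb: int


def place(containers, hosts):
    """Greedy placement: each container goes to the host with the most free memory."""
    placement = {}
    # Handle the largest containers first so they are not squeezed out later.
    for c in sorted(containers, key=lambda c: c.memory_mb, reverse=True):
        candidates = [h for h in hosts if h.free_memory_mb >= c.memory_mb]
        if not candidates:
            raise RuntimeError(f"no host has room for {c.name}")
        target = max(candidates, key=lambda h: h.free_memory_mb)
        target.free_memory_mb -= c.memory_mb
        target.containers.append(c.name)
        placement[c.name] = target.name
    return placement


# One application modeled as three containers spread over two hosts.
hosts = [Host("host-a", 2048), Host("host-b", 1200)]
app = [Container("web", 512), Container("db", 1024), Container("cache", 256)]
placement = place(app, hosts)
for container_name, host_name in placement.items():
    print(f"{container_name} -> {host_name}")
```

The point of the sketch is only that the application is described once, as a set of containers, and something underneath decides where each piece runs. A real scheduler would weigh far more than memory.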

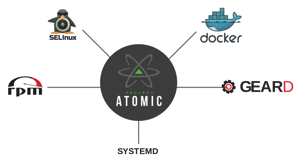

Project Atomic is a community for the technology behind optimized container hosts; it is also designed to feed requirements back into the respective upstream communities. By leaving the downstream release of Atomic Hosts to the Fedora community, CentOS community and Red Hat, Project Atomic can focus on driving technology innovation. This strategy encompasses containerized application delivery for the open hybrid cloud, including portability across bare metal systems, virtual machines and private and public clouds. Related is Red Hat's recently announced collaboration with Kubernetes to orchestrate Docker containers at scale.

I note at this point that the general concept of portably packaging applications is nothing particularly new. Throughout the aughts, as an industry analyst, I spent a fair bit of time writing research notes about the various virtualization and partitioning technologies available at the time. One such set of technologies was “application virtualization.” The term covered a fair bit of ground but included products such as one from Trigence which dealt with the problem of conflicting libraries in Windows apps (“DLL hell” if you recall). As a category, application virtualization remained something of a niche but it’s been re-imagined of late.

On the client, application virtualization has effectively been reborn as the app store, as I wrote about in 2012. And today, Docker in particular is effectively layering on top of operating system virtualization (aka containers) to create something which looks an awful lot like what application virtualization was intended to accomplish. As my colleague Matt Hicks writes:

“Docker is a Linux Container technology that introduced a well thought-out API for interacting with containers and a layered image format that defined how to introduce content into a container. It is an impressive combination and an open source ecosystem building around both the images and the Docker API. With Docker, developers now have an easy way to leverage a vast and growing amount of technology runtimes for their applications. A simple 'docker pull' and they can be running a Java stack, Ruby stack or Python stack very quickly.”

There are other pieces as well. Today, OpenShift (Red Hat’s PaaS) applications run across multiple containers, distributed across different container hosts. As we began integrating OpenShift with Docker, the OpenShift Origin GearD project was created to tackle issues like Docker container wiring, orchestration and management via systemd. Kubernetes builds on this work as described earlier.

Add it all together and applications become much more adaptable, much more mobile, much more distributed, and much more lightweight. But they’re still running on something. And that something is an operating system.

[Update: 8-14-2014. Updated and clarified the description of Project Atomic and its relationship to Linux distributions.]
